THE BLACK BOX: INSIDE AMERICA'S MASSIVE NEW SURVEILLANCE CENTRE

by James Bamford

The spring air in the small, sand-dusted town has a soft haze to it, and green-grey sagebrush rustles in the breeze. Bluffdale sits in a valley in the shadow of Utah's Wasatch Range[1] to the east and the Oquirrh Mountains to the west. It's the heart of Mormon country, where religious pioneers arrived more than 160 years ago. They came to escape the world, to understand the mysterious words sent from their god as revealed on golden plates, and to practise what has become known as "the principle", marriage to multiple wives.

But new pioneers have quietly begun moving into the area, secretive outsiders who keep to themselves. They too are focused on deciphering cryptic messages that only they have the power to understand. Just off Beef Hollow Road, less than 2km from brethren headquarters, thousands of hard-hatted builders are laying the groundwork for the newcomers' own temple and archive, a complex so large that it necessitated expanding the town's boundaries. Once built, it will be more than five times the size of the US Capitol building.

Rather than Bibles and worshippers, this temple will be filled with servers, computer intelligence experts and armed guards. These newcomers will be capturing, storing and analysing vast quantities of words and images hurtling through the world's telecommunications networks. In the town of Bluffdale, Big Love and Big Brother[2] have become uneasy neighbours.

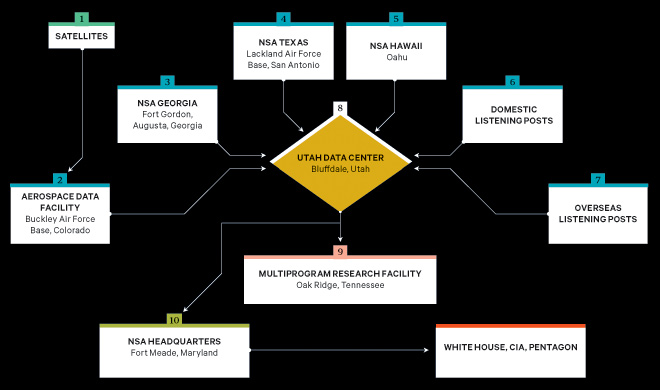

The blandly named Utah Data Center is being built for the US National Security Agency (NSA). A project of immense secrecy, it is the final piece in a complex puzzle assembled over the past decade. Its purpose: to intercept, decipher, analyse and store vast amounts of the world's communications from satellites and underground and undersea cables of international, foreign and domestic networks. The heavily fortified $2 billion (£1.25 billion) centre should be operational in September 2013. Stored in near-bottomless databases will be all forms of communication, including private emails, mobile phone[3] calls and Google searches, as well as personal data trails — travel itineraries, purchases and other digital "pocket litter". It is the realisation of the "total information awareness" programme created by the Bush administration — which was killed by Congress in 2003 after an outcry over its potential for invading privacy.

But "this is more than just a data centre", says one senior intelligence official who until recently was involved with the programme. The mammoth Bluffdale centre will have another important and far more secret role. It is also critical, he says, for breaking codes, which is crucial because much of the data that the centre will handle — financial information, business deals, foreign military and diplomatic secrets, legal documents, confidential personal communications — will be heavily encrypted. According to another top official also involved, the NSA made a breakthrough several years ago in cryptanalysis, or breaking complex encryption systems used not only by governments around the world but also average computer users. The upshot, says this official, is that "everybody's a target; everybody with communication is a target."

For the NSA, with tens of billions of dollars in post-9/11[4] budget awards, the cryptanalysis breakthrough came at a time of explosive growth in size and power. Established as an arm of the US Department of Defense (DoD) following Pearl Harbor, the NSA suffered a series of humiliations in the post-Cold War[5] years. Caught off guard by the first World Trade Center bombing, the blowing up of US embassies in East Africa, the attack on the USS Cole in Yemen and 9/11, the agency found its reason to exist in question. In response, the NSA has quietly been reborn. And although there is little indication that its effectiveness has improved (after all, it missed the attempted attacks by the underwear bomber on a flight to Detroit in 2009, and the car bomber in Times Square in 2010), there is no doubt that it has transformed itself into the largest, most covert and potentially most intrusive intelligence agency ever created.

In the process, and for the first time since Watergate, the NSA has turned its surveillance apparatus on the US and its citizens. It has established listening posts throughout the nation to collect and sift through billions of emails and phone calls, whether they originate within the country or overseas. It has created a supercomputer to look for patterns and unscramble codes. Finally, the agency has begun building a place to store everything captured in its electronic net. And it's all being done in secret.

Freezing fog blanketed Salt Lake City on the morning of January 6, 2011, mixing with heavy grey smog. At the city's international airport, many inbound flights were delayed or diverted and outbound jets were grounded. But among those making it through was a figure whose grey suit and tie made him almost blend into the background. He was tall and thin, with dark caterpillar eyebrows beneath a shock of matching hair. Accompanied by bodyguards, the man was NSA deputy director Chris Inglis, the agency's highest-ranking civilian, who ran its worldwide day-to-day operations.

Inglis arrived in Bluffdale at the site of the future data centre, a flat, unpaved runway on a little-used part of Camp Williams, a National Guard training site. There, in a tent set up for the occasion, Inglis joined Harvey Davis, the agency's associate director for installations and logistics, and Utah senator Orrin Hatch, along with a few generals and politicians in a surreal ceremony. Standing in an odd wooden sandbox and holding gold-painted shovels, they jabbed awkwardly at the sand and thus officially broke ground on what the local media had dubbed "the spy centre". Hoping for some details on what was to be built, reporters turned to one of the guests, Lane Beattie of the Salt Lake Chamber of Commerce. Did he have any idea of the purpose behind the new facility? "Absolutely not," he said with a half laugh. "Nor do I want them spying on me."

Inglis simply engaged in double-talk, emphasising the least threatening aspect of the centre: "It's a state-of-the-art facility designed to support the intelligence community in its mission to, in turn, enable and protect the nation's cybersecurity." Cybersecurity will certainly be among the areas focused on in Bluffdale, but what is collected, how it is collected and what is done with the material are more important issues. Battling hackers[6] makes for a nice cover: who could be against it? Then the reporters turned to Hatch, who proudly described the centre as "a great tribute to Utah".

This was supposedly the official ground-breaking for the nation's largest cybersecurity project, yet no one from the Department of Homeland Security, the agency responsible for protecting civilian networks from cyberattack, spoke at it. In fact, the official who'd originally introduced the data centre, at a press conference in Salt Lake City in October 2009, had nothing to do with cybersecurity. It was Glenn Gaffney, deputy director of national intelligence for collection, a career CIA man. As head of collection for the intelligence community, he managed the country's human and electronic spies.

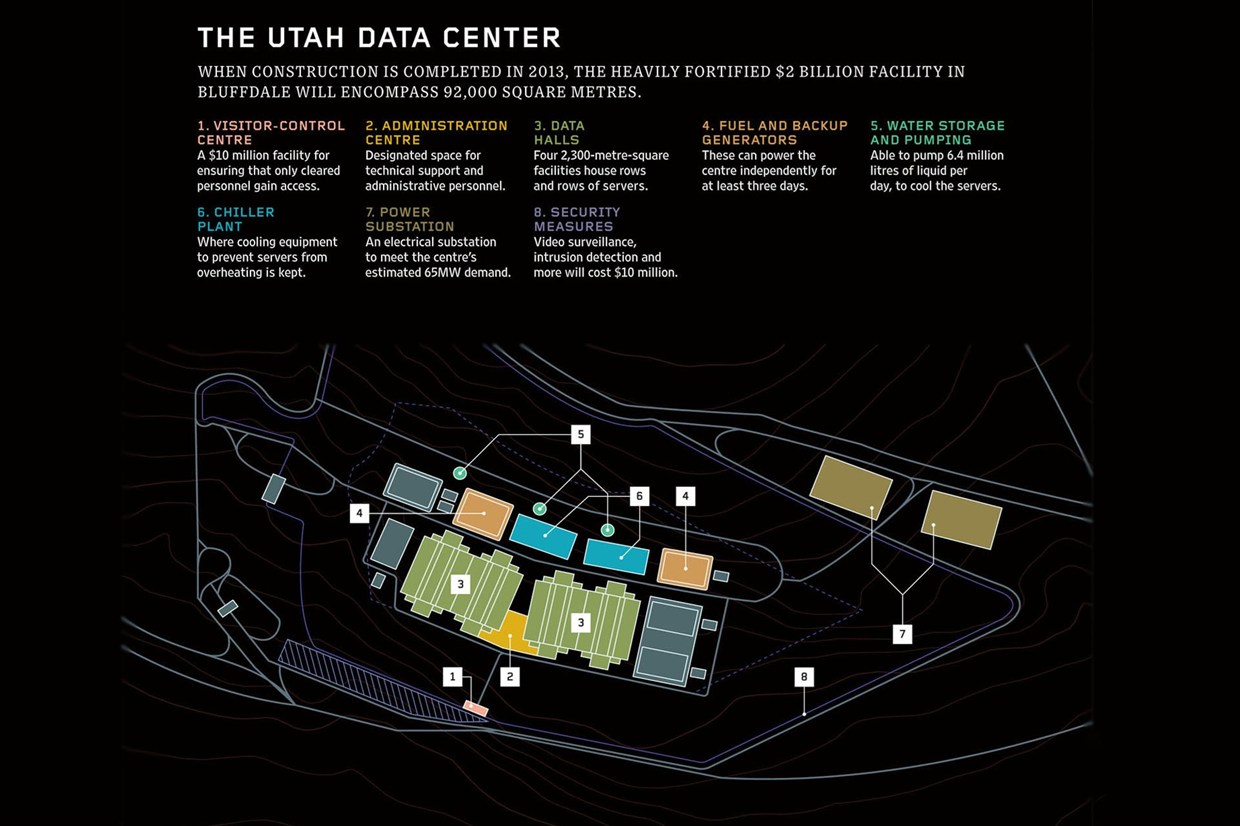

Within days, the gold shovels would be gone and Inglis and the generals would be replaced by some 10,000 builders. The plans for the centre show an extensive security system: an elaborate $10 million (£6 million) anti-terrorism protection programme, including a fence designed to stop a heavy vehicle travelling 80kph, closed-circuit cameras, a biometric identification system, a vehicle-inspection facility and a visitor-control centre. Inside, the facility will consist of four 2,300-square-metre halls filled with servers, complete with raised floor space for cables and storage. In addition, there will be more than 83,600 square metres for technical support and administration. The entire site will be self-sustaining, with fuel tanks large enough to power the backup generators for three days in an emergency, water storage with the capability of pumping 6.4 million litres of liquid per day, as well as a sewage system and air-conditioning system to keep all those servers cool.

Electricity will come from the centre's own substation built by Rocky Mountain Power to satisfy the 65-megawatt power demand. Such a mammoth amount of energy comes with a mammoth price tag — about $40 million (£25 million) a year, according to one estimate.

Given the facility's scale and the fact that a terabyte of data can now be stored on a flash drive the size of your little finger, the amount of information that could be housed in Bluffdale is staggering. But so is the exponential growth in the amount of intelligence data being produced every day by the sensors of the intelligence agencies. As a result of this "expanding array of theatre airborne and other sensor networks", as a 2007 Department of Defense report puts it, the Pentagon is trying to expand its worldwide communications network, known as the Global Information Grid, to handle yottabytes. [Each character on this page is a byte of data. A kilobyte is 10³ or 1000 bytes (or 1024 bytes, 2¹⁰, in the binary convention). A million bytes, a megabyte, is 1000 × 1000 bytes or 10⁶ bytes. 10 terabytes (where a terabyte is 10¹² bytes) would hold the entire print collection of the Library of Congress. A yottabyte is 10²⁴ bytes, so large that no one has yet coined a term for the next higher magnitude.] It needs that capacity because, according to a report by Cisco, global internet traffic will quadruple from 2010 to 2015, reaching 966 exabytes per year. [An exabyte is 10¹⁸ bytes and a million exabytes equal a yottabyte.] Eric Schmidt, Google's[7] former CEO, once estimated that all human knowledge created from the dawn of man to 2003 totalled five exabytes. And the flow shows no sign of slowing. In 2011 more than two billion of the world's 6.9 billion people were connected to the internet. By 2015, market research firm IDC estimates, there will be 2.7 billion users. Thus, the NSA's need for a 93,000-square-metre data storehouse. Should the agency ever fill the Utah centre with a yottabyte of information, it would be equal to about 500 quintillion (500,000,000,000,000,000,000) pages of text.
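The unit arithmetic in this passage is easy to check for yourself. The short Python sketch below is illustrative only: it takes the decimal definitions and the figures quoted above (966 exabytes a year, 500 quintillion pages) at face value, and the variable names are made up for the example.

```python
# Decimal byte units as used in the article (powers of ten, not powers of two).
UNITS = {
    "kilobyte": 10**3,
    "megabyte": 10**6,
    "terabyte": 10**12,
    "exabyte": 10**18,
    "yottabyte": 10**24,
}

# Figures quoted in the text.
TRAFFIC_2015_EXABYTES_PER_YEAR = 966   # Cisco projection cited above
PAGES_PER_YOTTABYTE = 500 * 10**18     # "500 quintillion pages"

# A yottabyte expressed in exabytes: should come out as one million.
exabytes_per_yottabyte = UNITS["yottabyte"] // UNITS["exabyte"]

# Bytes per page implied by the pages-per-yottabyte figure.
bytes_per_page = UNITS["yottabyte"] // PAGES_PER_YOTTABYTE

# Years of traffic at the projected 2015 rate needed to reach one yottabyte.
years_to_fill = exabytes_per_yottabyte / TRAFFIC_2015_EXABYTES_PER_YEAR

if __name__ == "__main__":
    print(f"{exabytes_per_yottabyte:,} exabytes per yottabyte")
    print(f"{bytes_per_page:,} bytes per page of text")
    print(f"{years_to_fill:,.0f} years of 2015-level traffic per yottabyte")
```

On those figures a page works out to about 2,000 bytes, and a full year of the projected 2015 internet traffic would amount to only about a thousandth of a yottabyte.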

The data stored in Bluffdale will go far beyond the world's billions of public web pages. The NSA is more interested in the invisible web, also known as the deep web or deepnet — data beyond the reach of the public. This includes password[8]-protected data, US and foreign government communications, and non-commercial file-sharing between trusted peers. "The deep web contains government reports, databases and other sources of information of high value to DoD and the intelligence community," according to a 2010 Defense Science Board report. "Tools are needed to find and index data in the deep web... Stealing the classified secrets of a potential adversary is where the [intelligence] community is most comfortable."

With its new Utah Data Center, the NSA will at last have the capability to store, and rummage through, all those stolen secrets. The question, of course, is how the agency defines who is, and who is not, "a potential adversary".

Before yottabytes of data can begin piling up inside the servers of the NSA's new centre, they must be collected. To achieve that more efficiently, the agency has installed secret electronic-monitoring rooms in major US telecom facilities. These are where the agency taps into the US communications networks, a practice that came to light during the Bush years[9] but was never acknowledged by the agency. The broad outlines of the so-called warrantless-wiretapping programme have long been exposed — how the NSA secretly and illegally bypassed the Foreign Intelligence Surveillance Court, which was supposed to oversee and authorise highly targeted domestic eavesdropping; how the programme allowed monitoring of millions of American phone calls and email. In the wake of the programme's exposure, Congress passed the FISA Amendments Act of 2008, which largely made the practices legal. Telecoms that had agreed to participate in the illegal activity were granted immunity from prosecution and lawsuits. What hasn't been revealed until now, however, was the size of this domestic spying programme.

For the first time, a former NSA official has gone on the record to describe the programme, codenamed Stellar Wind, in detail. William Binney was a senior crypto-mathematician responsible for automating the agency's worldwide listening network. A tall man with dark, determined eyes behind thick-rimmed glasses, the 68-year-old spent nearly four decades breaking codes and finding new ways to channel billions of private phone calls and email messages from around the world into the NSA's bulging databases. As chief and one of the two cofounders of the agency's Signals Intelligence Automation Research Center, Binney and his team designed much of the infrastructure that's probably still in use.

He explains that the agency could have installed its gear at the nation's cable landing stations — the two dozen or so sites where fibre-optic cables come ashore. If it had, the NSA could have limited its eavesdropping to international communications, which at that time was all that was allowed under US law. Instead it put wiretapping rooms at key junctions throughout the country, thus gaining access to most of the domestic traffic. The network of intercept stations, or "switches", goes far beyond the room in an AT&T building in San Francisco exposed by a whistleblower in 2006. "I think there's ten to 20 of them," Binney says. "Not just San Francisco; they have them in the middle of the country and on the East Coast."

Listening in doesn't stop at the telecom switches. To capture satellite communications, the agency also monitors AT&T's powerful earth stations, satellite receivers in locations that include Roaring Creek and Salt Creek. Tucked away in rural Pennsylvania, Roaring Creek's three 32-metre dishes handle much of the country's communications to and from Europe and the Middle East. And on a remote stretch in Arbuckle, California, three similar dishes at the company's Salt Creek station service the Pacific Rim and Asia.

Binney left the NSA in late 2001, shortly after the agency launched its warrantless-wiretapping programme. "They violated the [US] Constitution setting it up," he says. "But they didn't care. They were going to do it, and they were going to crucify anyone who stood in the way. When they started violating the Constitution, I couldn't stay." Binney says Stellar Wind was larger than has been disclosed and included listening to domestic phone calls as well as inspecting domestic email.[10] At the outset the programme recorded 320 million calls a day, he says — about 73 to 80 per cent of the total volume of the agency's worldwide intercepts.

The haul only grew. According to Binney, who kept in close contact with agency employees until a few years ago, the taps in the secret rooms dotting the country are powered by software programs that conduct "deep packet inspection", examining internet traffic as it passes through the ten-gigabit-per-second cables at the speed of light.

The software, created by a company called Narus that's now part of Boeing,[11] is controlled from NSA headquarters at Fort Meade in Maryland and searches US sources for addresses, locations, countries and phone numbers, as well as watch-listed names, keywords and phrases in emails. Any communication that arouses suspicion, especially those to or from the million or so people on agency watch lists, is recorded and transmitted to the NSA. The scope expands from there, Binney says. Once a name is entered into the Narus database, all communications to and from that person are routed to the NSA's recorders. "If your number's in there? Routed and gets recorded." And when Bluffdale is completed, whatever is collected will be routed there.

According to Binney, one of the deepest secrets of the Stellar Wind programme — again, never confirmed until now — was that the NSA gained warrantless access to AT&T's domestic and international billing records. As of 2007, AT&T had more than 2.8 trillion records in a database at its Florham Park, New Jersey, complex. Verizon was also part of the programme. "That multiplies the call rate by at least a factor of five," Binney says. "So you're over a billion and a half calls a day." (Verizon and AT&T said they would not comment on matters of national security.)

After he left the NSA, Binney suggested a system for monitoring people's communications according to how closely they are connected to a target. The further away from the target — say just an acquaintance of a friend of the target — the less the surveillance. But the agency rejected the idea, and, given the massive new storage facility in Utah, Binney suspects that it now simply collects everything. He says: "They're storing everything they gather."

Once communications are stored, the datamining begins. "You can watch everybody all the time with datamining," Binney says. Everything a person does is charted on a graph, "financial transactions or travel or anything", he says. Thus the NSA is able to paint a detailed picture of someone's life. The NSA can also eavesdrop[12] on phone calls directly and in real time. According to Adrienne Kinne, who worked before and after 9/11 as a voice interceptor at the NSA facility in Georgia, in the wake of the World Trade Center attacks "basically all rules were thrown out the window, and they would use any excuse to justify a waiver to spy on Americans". Even journalists calling home from overseas were included. "A lot of time you could tell they were calling their families," she says. "Intimate, personal conversations." Kinne found eavesdropping on innocent citizens distressing. "It's like finding somebody's diary," she says.

But there is reason for everyone to be distressed about the practice. Once the door is open for the government to spy on US citizens, there are temptations to abuse that power for political purposes, as when Richard Nixon eavesdropped on his political enemies during Watergate and ordered the NSA to spy on anti-war protesters. Those and other abuses prompted Congress to enact prohibitions in the mid-1970s against domestic spying.[13]

Before he left the NSA, Binney tried to persuade officials to create a more targeted system that could be authorised by a court. At the time, the agency had 72 hours to obtain a legal warrant; Binney devised a method to computerise the system. But such a system would have required close co-ordination with the courts, and NSA officials weren't interested, Binney says. Asked how many communications — "transactions", in NSA's lingo — the agency has intercepted since 9/11, Binney estimates "between 15 and 20 trillion over 11 years".

Binney hoped that Barack Obama's new administration might be open to addressing constitutional concerns. He and another former senior NSA analyst, J Kirk Wiebe, tried to explain an automated warrant-approval system to the Department of Justice's inspector general. They were given the brush-off. "They said, oh, OK, we can't comment," Binney says. Sitting in a restaurant not far from NSA headquarters, the place where he spent nearly 40 years of his life, Binney held his thumb and forefinger close together. "We are, like, that far from a turnkey totalitarian state," he says.

There is still one technology preventing untrammelled government access to private digital data: strong encryption. Anyone, from terrorists[14] and weapons dealers to corporations, financial institutions and ordinary email senders, can use it to seal their messages, plans, photos and documents in hardened data shells. For years, one of the hardest shells has been the Advanced Encryption Standard (AES), one of several algorithms used by much of the world to encrypt data. Available in three different strengths (128 bits, 192 bits and 256 bits), it's incorporated in most commercial email programs and web browsers and is considered so strong that the NSA has even approved its use for top-secret US government communications. Most experts say that a so-called brute-force computer attack on the algorithm (trying one combination after another to unlock the encryption) would likely take longer than the age of the universe. For a 128-bit cipher, the number of trial-and-error attempts would be 340 undecillion (340 × 10³⁶).
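To get a feel for why experts make that claim, the brute-force arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope illustration, not a description of any real attack: the guessing rate of one quintillion keys a second and the 13.8-billion-year age of the universe are assumptions brought in for the example, and the function names are hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60
AGE_OF_UNIVERSE_YEARS = 13.8e9  # assumption: commonly cited estimate, not from the article


def keyspace(bits: int) -> int:
    """Number of possible keys for a key of the given length in bits."""
    return 2 ** bits


def years_to_exhaust(bits: int, guesses_per_second: float) -> float:
    """Worst-case years needed to try every key at a constant guessing rate."""
    return keyspace(bits) / guesses_per_second / SECONDS_PER_YEAR


# Assumption: a hypothetical machine testing 10**18 keys per second,
# i.e. one key per operation at exaflop speed (very generous to the attacker).
HYPOTHETICAL_RATE = 1e18

if __name__ == "__main__":
    for bits in (128, 192, 256):
        years = years_to_exhaust(bits, HYPOTHETICAL_RATE)
        print(f"AES-{bits}: {keyspace(bits):.2e} keys, {years:.2e} years, "
              f"{years / AGE_OF_UNIVERSE_YEARS:.1e} x age of universe")
```

Even at that generous rate, exhausting a 128-bit key space would take on the order of ten trillion years, several hundred times the assumed age of the universe, which is consistent with the experts' claim.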

Breaking into those complex mathematical shells like the AES is one of the key reasons for the construction going on in Bluffdale. That kind of cryptanalysis requires two major ingredients: super-fast computers[15] to conduct brute-force attacks on encrypted messages and a massive number of those messages for the computers to analyse. The more messages from a given target, the more likely it is for the computers to detect telltale patterns, and Bluffdale will be able to hold a great many messages. "We questioned it one time," says another source, a senior intelligence manager who was also involved with the planning. "Why were we building this NSA facility? And, boy, they rolled out all the old guys — the crypto guys." According to the official, these experts told then-director of national intelligence Dennis Blair, "You've got to build this thing because we just don't have the capability of doing the code-breaking." It was a candid admission. In the long war between the code breakers and the code makers — the tens of thousands of cryptographers in the worldwide computer-security industry — the code breakers were admitting defeat.

So the agency had one major ingredient, a massive data-storage facility, under way. Meanwhile, across the country in Tennessee, the US government was working in utmost secrecy on the other vital element: the most powerful computer the world has ever known.

The plan was launched in 2004 as a modern-day Manhattan Project. Dubbed the High Productivity Computing Systems programme, it aimed to advance computer speed a thousandfold, creating a machine that could execute a quadrillion (10¹⁵) operations a second, known as a petaflop, the computer equivalent of breaking the land speed record. And as with the Manhattan Project, the venue chosen for the supercomputing programme was the town of Oak Ridge in eastern Tennessee, a rural area where sharp ridges give way to low, scattered hills, and the southwestward-flowing Clinch River bends sharply to the southeast. About 40km from Knoxville, it is the "secret city" where uranium-235 was extracted for the first atomic bomb. A sign near the exit said: "What you see here, what you do here, what you hear here, when you leave here, let it stay here." Today, not far from where that sign stood, Oak Ridge is home to the Department of Energy's Oak Ridge National Laboratory, and it's engaged in a new secret war.

In 2004, as part of the supercomputing programme, the Department of Energy established its Oak Ridge Leadership Computing Facility for multiple agencies to join forces on the project. But in reality there would be two tracks, one unclassified, in which all of the scientific work would be public, and another top secret,[16] in which the NSA could pursue its own computer covertly. "For our purposes, they had to create a separate facility," says a former senior NSA computer expert who worked on the project and is still associated with the agency. (He is one of three sources who described the programme.) It was an expensive undertaking.

Known as the Multiprogram Research Facility, or Building 5300, the $41 million, five-storey, 20,000m² structure was built on a plot of land on the lab's East Campus and completed in 2006. Inside, 318 scientists, computer engineers and other staff work in secret on the cryptanalytic applications of high-speed computing and other classified projects. The centre was named in honour of George R Cotter, the NSA's now-retired chief scientist and head of its information technology programme. Not that you'd know it. "There's no sign on the door," says the ex-NSA computer expert.

At the DoE's unclassified centre at Oak Ridge the team had its Cray XT4 supercomputer upgraded to a warehouse-sized XT5. Named Jaguar for its speed, it clocked in at 1.75 petaflops and was the world's fastest computer in 2009.

Meanwhile, over in Building 5300, the NSA succeeded in building an even faster supercomputer. "They made a big breakthrough," says another former senior intelligence official, who helped oversee the programme.

The NSA's machine was probably similar to the unclassified Jaguar, but it was much faster out of the gate, modified specifically for cryptanalysis and targeted against one or more specific algorithms,[17] like the AES. They were moving from the R&D phase to actually attacking extremely difficult encryption systems.

The codebreaking effort was up and running.

The agency pulled the shade down on the project, says the former official. "Only the chairman, vice chairman and the two staff directors of each intelligence committee were told," he says. "They were thinking this was going to give them the ability to crack current public encryption."

In addition to giving the NSA access to a tremendous amount of Americans' personal data, such an advance would also open a window on a trove of foreign secrets. Whereas today most sensitive communications use the strongest encryption, much of the older data stored by the NSA, including a great deal of what will be transferred to Bluffdale once the centre is complete, is encrypted with more vulnerable ciphers. "Remember," says the former intelligence official, "a lot of foreign government stuff we've never been able to break is 128[-bit] or less. Break all that and you'll find out a lot more of what you didn't know — stuff we've already stored — so there's an enormous amount of information still in there."

That, he notes, is where the value of Bluffdale and its mountains of long-stored data will come in. What can't be broken today may be broken tomorrow. "Then you can see what they were saying in the past," he says. "By extrapolating the way they did business, it gives us an indication of how they may do things now." The danger, the former official says, is that it's not only foreign government information that is locked in weaker algorithms; it's also a great deal of personal domestic communications, such as Americans' email intercepted by the NSA in the past decade.

But first the supercomputer must break the encryption, and to do that, speed is everything. The faster the computer, the faster it can break codes. The Data Encryption Standard, the 56-bit predecessor to the AES, debuted in 1976 and lasted about 25 years. The AES made its first appearance in 2001 and is expected to remain strong and durable for at least a decade. But if the NSA has secretly built a computer that is considerably faster than machines in the unclassified arena, then the agency has a chance of breaking the AES in a much shorter time. And with Bluffdale in operation, the NSA will have the luxury of storing an ever-expanding archive of intercepts until that breakthrough comes along.

But despite its progress, the agency has not finished building at Oak Ridge, nor is it satisfied with breaking the petaflop barrier. Its next goal is to reach exaflop speed, one quintillion (10¹⁸) operations a second, and eventually zettaflop (10²¹) and yottaflop.

These goals have considerable support in Congress. Last November a bipartisan group of 24 senators sent a letter to President Obama urging him to approve continued funding through 2013 for the Department of Energy's exascale computing initiative (the NSA's budget requests are classified). They cited the necessity to keep up with and surpass China and Japan. "The race is on to develop exascale computing capabilities," the senators noted. By late 2011 the Jaguar (now with a peak speed of 2.33 petaflops) ranked third behind Japan's "K Computer", with 10.51 petaflops, and the Chinese Tianhe-1A system, with 2.57 petaflops.

But the real competition will take place in the classified realm. To secretly develop the new exaflop (or higher) machine by 2018, the NSA has proposed constructing two connecting buildings, totalling 24,100m², near its current facility on the East Campus of Oak Ridge. Called the Multiprogram Computational Data Center, the buildings will be low and wide like giant warehouses, a design necessary for the dozens of computer cabinets that will compose an exaflop-scale machine, possibly arranged in a cluster to minimise the distance between circuits. According to a presentation delivered to DoE employees in 2009, it will be an "unassuming facility with limited view from roads", in keeping with the NSA's secrecy. And it will have an extraordinary appetite for electricity, using about 200 megawatts, enough to power 200,000 homes. In the meantime Cray is working on the next step for the NSA, funded in part by a $250 million contract with the Defense Advanced Research Projects Agency. It's a massively parallel supercomputer called Cascade, a prototype of which is due at the end of 2012. Its development will run alongside the unclassified effort for the DoE and other partner agencies. That project, due in 2013, will upgrade the Jaguar XT5 into an XK6, codenamed Titan, upping its speed to ten to 20 petaflops.

Yottabytes and exaflops, septillions and undecillions — the race for computing speed and data storage goes on. In his 1941 story The Library of Babel, Jorge Luis Borges imagined a collection of information where the entire world's knowledge is stored but barely a single word is understood. In Bluffdale the NSA is constructing a library on a scale that even Borges might not have contemplated. And to hear the masters of the agency tell it, it's only a matter of time until every word is illuminated.

Footnotes

[1] http://en.wikipedia.org/wiki/Wasatch_Range

[2] http://www.wired.co.uk/news/archive/2010-04/29/erasing-david-review

[3] http://www.wired.co.uk/reviews/mobile-phones

[4] http://www.wired.co.uk/news/archive/2011-09/12/how-to-beat-terrorism

[5] http://www.wired.co.uk/magazine/archive/2012/01/start/from-russia-with-uranium

[6] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[7] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[8] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[9] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[10] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[11] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[12] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[13] http://www.wired.co.uk/news/archive/2012-02/20/anti-terror-plans

[14] http://www.wired.co.uk/news/archive/2011-10/13/hope-bomb-plot-true

[15] http://www.wired.co.uk/news/archive/2011-12/12/meet-gordon

[16] http://www.wired.co.uk/magazine/archive/2011/03/features/nuclear-island

[17] http://www.wired.co.uk/news/archive/2012-01/06/big-data-evolution-and-missiles

James Bamford is author of The Shadow Factory: The Ultra-Secret NSA from 9/11 to the Eavesdropping on America (Anchor Books)

This article was edited by Dan Smith.

It appeared March 30, 2012 in Wired (UK) and is archived at

http://www.wired.co.uk/magazine/archive/2012/05/features/the-black-box